Search Engine Friendly Development: Crawlable Link Structures

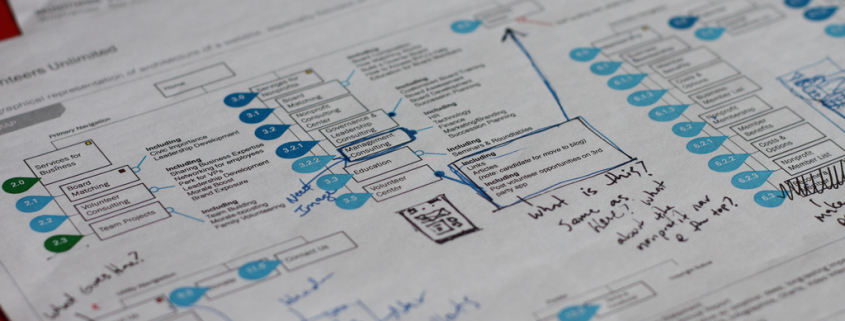

Part 2 of our 8-part series on developing search engine friendly website structures. This was originally written by Rand Fishkin and Moz Staff, and posted on Moz. Image courtesy of Design Beep.

Just as search engines need to see content in order to list pages in their massive keyword-based indexes, they also need to see links in order to find the content in the first place. A crawlable link structure, one that lets the crawlers browse the pathways of a website, is vital to their finding all of the pages on a website. Hundreds of thousands of sites make the critical mistake of structuring their navigation in ways that search engines cannot access, which hinders those sites' ability to get pages listed in the search engines’ indexes.

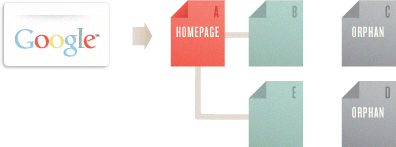

Below, we’ve illustrated how this problem can happen:

In the example above, Google’s crawler has reached page A and sees links to pages B and E. However, even though C and D might be important pages on the site, the crawler has no way to reach them (or even know they exist). This is because no direct, crawlable links point to pages C and D. As far as Google can see, they don’t exist! Great content, good keyword targeting, and smart marketing won’t make any difference if the crawlers can’t reach your pages in the first place.
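To make the illustration concrete, here is a minimal Python sketch of how a crawler discovers pages by following links. The link graph is an assumption made up for this example: only the links from A to B and E come from the illustration, and the other entries are hypothetical. It is not how any particular search engine is implemented.

```python
from collections import deque

# Hypothetical link graph: page -> pages it links to.
# Only A -> B and A -> E come from the illustration; the rest is assumed.
links = {
    "A": ["B", "E"],
    "B": ["A"],
    "C": ["D"],
    "D": ["C"],
    "E": ["A"],
}

def reachable(start, graph):
    """Return the set of pages discoverable by following links from start."""
    seen = {start}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in graph.get(page, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return seen

found = reachable("A", links)
orphaned = sorted(set(links) - found)

print(sorted(found))  # ['A', 'B', 'E']
print(orphaned)       # ['C', 'D']
```

Pages C and D link to each other, but because nothing reachable from A links to either of them, a crawl that starts at A never learns they exist.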

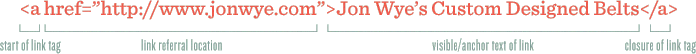

Link tags can contain images, text, or other objects, all of which provide a clickable area on the page that users can engage to move to another page. These links are the original navigational elements of the Internet, known as hyperlinks. In the above illustration, the “<a” tag indicates the start of a link. The link referral location tells the browser (and the search engines) where the link points. In this example, the URL https://www.jonwye.com/ is referenced. Next, the visible portion of the link for visitors, called anchor text in the SEO world, describes the page the link points to. The linked-to page is about custom belts made by Jon Wye, thus the anchor text “Jon Wye’s Custom Designed Belts.” The “</a>” tag closes the link to constrain the linked text between the tags and prevent the link from encompassing other elements on the page.

This is the most basic format of a link, and it is eminently understandable to the search engines. The crawlers know that they should add this link to the engines’ link graph of the web, use it to calculate query-independent variables (like Google’s PageRank), and follow it to index the contents of the referenced page.
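The parts of a link described above (opening tag, referral location, anchor text, closing tag) can be pulled apart with Python's standard-library HTML parser. This is only a sketch of the idea: the `LinkExtractor` class name is made up for this example, and real crawlers are far more involved.

```python
from html.parser import HTMLParser

class LinkExtractor(HTMLParser):
    """Collects (href, anchor text) pairs from <a> tags."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None   # href of the link currently open, if any
        self._text = []     # text fragments seen inside that link

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self._href = href
                self._text = []

    def handle_data(self, data):
        # Text between <a ...> and </a> is the anchor text.
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None

html = """<a href="https://www.jonwye.com/">Jon Wye's Custom Designed Belts</a>"""

parser = LinkExtractor()
parser.feed(html)
parser.close()

print(parser.links)
# [('https://www.jonwye.com/', "Jon Wye's Custom Designed Belts")]
```

The href gives the crawler the next URL to visit, and the anchor text gives it a description of what it should expect to find there.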

Submission-required forms

If you require users to complete an online form before accessing certain content, chances are search engines will never see those protected pages. Forms can include a password-protected login or a full-blown survey. In either case, search crawlers generally will not attempt to submit forms, so any content or links that would be accessible via a form are invisible to the engines.

Links in unparseable JavaScript

If you use JavaScript for links, you may find that search engines either do not crawl or give very little weight to the links embedded within. Standard HTML links should replace JavaScript (or accompany it) on any page you’d like crawlers to crawl.
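As a rough illustration, the sketch below models a crawler that reads HTML but does not execute scripts. That is a simplifying assumption for this example, not a description of any specific engine, and the markup, URLs, and `HrefCollector` name are all hypothetical.

```python
from html.parser import HTMLParser

class HrefCollector(HTMLParser):
    """Collects crawlable href values from <a> tags without running any script."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            # Skip missing hrefs and javascript: pseudo-URLs;
            # neither names a page that can be fetched directly.
            if href and not href.lower().startswith("javascript:"):
                self.hrefs.append(href)

page = """
<span onclick="window.location='/pandas/1'">Panda one</span>
<a href="javascript:goTo('/pandas/2')">Panda two</a>
<a href="/pandas/3" onclick="track()">Panda three</a>
"""

collector = HrefCollector()
collector.feed(page)
collector.close()

print(collector.hrefs)  # ['/pandas/3']
```

Only the third link survives, because it pairs its script with a standard HTML href. That is the "accompany it" option from the paragraph above.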

Links pointing to pages blocked by the Meta Robots tag or robots.txt

The Meta Robots tag and the robots.txt file both allow a site owner to restrict crawler access to a page. Just be warned that many a webmaster has used these directives in an attempt to block access by rogue bots, only to discover that they had unintentionally stopped search engines from crawling as well.
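One way to catch this mistake before it goes live is to test a robots.txt file against the crawlers you care about. The sketch below uses Python's standard `urllib.robotparser` module. The rules, the `BadBot` name, and the example.com URL are assumptions for illustration, and individual search engines may interpret edge cases differently from this parser.

```python
from urllib.robotparser import RobotFileParser

def allowed(robots_txt, user_agent, url):
    """Return True if user_agent may fetch url under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)

# Meant to block a rogue bot, but the wildcard applies to every crawler.
too_broad = """User-agent: *
Disallow: /
"""

# Narrower version that names only the (hypothetical) rogue bot.
targeted = """User-agent: BadBot
Disallow: /
"""

url = "https://www.example.com/products/"

print(allowed(too_broad, "Googlebot", url))  # False
print(allowed(targeted, "Googlebot", url))   # True
print(allowed(targeted, "BadBot", url))      # False
```

Note that robots.txt is advisory: a well-behaved crawler honors it, but a truly rogue bot can simply ignore it.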

Frames or iframes

Technically, links in both frames and iframes are crawlable, but both present structural issues for the engines in terms of organization and following. Unless you’re an advanced user with a good technical understanding of how search engines index and follow links in frames, it’s best to stay away from them.

Robots don’t use search forms

Although this relates directly to the above warning on forms, it’s such a common problem that it bears mentioning. Some webmasters believe if they place a search box on their site, then engines will be able to find everything that visitors search for. Unfortunately, crawlers don’t perform searches to find content, leaving millions of pages inaccessible and doomed to anonymity until a crawled page links to them.

Links in Flash, Java, and other plug-ins

The links embedded inside the Juggling Panda site (from our above example) are perfect illustrations of this phenomenon. Although dozens of pandas are listed and linked to on the page, no crawler can reach them through the site’s link structure, rendering them invisible to the engines and hidden from users’ search queries.

Links on pages with many hundreds or thousands of links

Search engines will only crawl so many links on a given page. This restriction is necessary to cut down on spam and conserve rankings. Pages with hundreds of links on them are at risk of not getting all of those links crawled and indexed.

Rel="nofollow" can be used with the following syntax:

`<a href="http://moz.com" rel="nofollow">Lousy Punks!</a>`

Links can have lots of attributes. The engines ignore nearly all of them, with the important exception of the rel="nofollow" attribute. In the example above, adding the rel="nofollow" attribute to the link tag tells the search engines that the site owners do not want this link to be interpreted as an endorsement of the target page.

Nofollow, taken literally, instructs search engines to not follow a link (although some follow it anyway). The nofollow tag came about as a method to help stop automated blog comment, guest book, and link injection spam, but has morphed over time into a way of telling the engines to discount any link value that would ordinarily be passed. Links tagged with nofollow are interpreted slightly differently by each of the engines, but it is clear they do not pass as much weight as normal links.
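If you want to audit which links on a page carry the attribute, a short Python sketch like the one below can sort them. The `NofollowClassifier` name and the sample markup are assumptions for this example. It only reports what the markup says and makes no claim about how any engine weighs the result.

```python
from html.parser import HTMLParser

class NofollowClassifier(HTMLParser):
    """Sorts <a> links into followed and nofollowed lists based on rel."""

    def __init__(self):
        super().__init__()
        self.followed = []
        self.nofollowed = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr_map = dict(attrs)
        href = attr_map.get("href")
        if not href:
            return
        # rel may hold several space-separated tokens, e.g. "nofollow noopener".
        rel_tokens = (attr_map.get("rel") or "").lower().split()
        if "nofollow" in rel_tokens:
            self.nofollowed.append(href)
        else:
            self.followed.append(href)

page = """
<a href="http://moz.com" rel="nofollow">Lousy Punks!</a>
<a href="https://www.jonwye.com/">Jon Wye's Custom Designed Belts</a>
"""

classifier = NofollowClassifier()
classifier.feed(page)
classifier.close()

print(classifier.followed)    # ['https://www.jonwye.com/']
print(classifier.nofollowed)  # ['http://moz.com']
```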

Are nofollow links bad?

Although they don’t pass as much value as their followed cousins, nofollowed links are a natural part of a diverse link profile. A website with lots of inbound links will accumulate many nofollowed links, and this isn’t a bad thing. In fact, Moz’s Ranking Factors showed that high-ranking sites tended to have a higher percentage of inbound nofollow links than lower-ranking sites.
